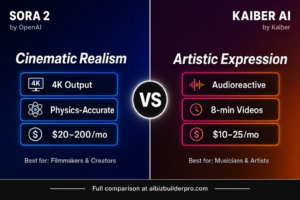

While OpenAI’s Sora and Google’s Veo capture headlines with their massive computational power and enterprise pricing, a quieter revolution is happening: Tencent’s HunyuanVideo 1.5 delivers professional-grade video generation with only 8.3 billion parameters, small enough to run on consumer graphics cards. This isn’t just an incremental improvement; it’s a fundamental shift that democratizes high-quality AI video generation for creators who can’t afford $200/month subscriptions or don’t want to depend on cloud services.

After extensive testing throughout March 2026, HunyuanVideo 1.5 emerges as the most significant open-source video model yet released. It matches commercial offerings in visual quality while consuming dramatically fewer resources, making professional AI video accessible to independent creators, developers, and studios working with limited budgets. Here’s everything you need to know about this game-changing release.

What Is HunyuanVideo 1.5 and Why Does It Matter?

HunyuanVideo 1.5 is Tencent’s lightweight, open-source AI video generation model that produces high-quality 1080p videos from text prompts or static images. Released in November 2025 and refined through early 2026, it represents a breakthrough in efficiency: delivering quality comparable to models 3-5 times its size while running on consumer hardware with as little as 14GB VRAM.

The model targets three distinct audiences: independent creators seeking cost-effective professional tools, developers building AI video features into applications, and researchers exploring video generation technology. Unlike cloud-only services, HunyuanVideo 1.5 can be self-hosted, giving creators full control over their content, data privacy, and operational costs.

Core Capabilities That Define HunyuanVideo 1.5

Unified Text-to-Video and Image-to-Video

HunyuanVideo 1.5 handles both text-to-video (T2V) generation from prompts and image-to-video (I2V) animation from static images in a single unified pipeline. This eliminates the need for separate specialized tools. Generate a character concept with an image model, then animate it with HunyuanVideo—all in one workflow with consistent quality.

Exceptional Efficiency: 8.3B Parameters

The model’s lightweight 8.3 billion parameter architecture is its defining innovation. Using an attention mechanism called SSTA (Selective and Sliding Tile Attention), which prunes redundant spatiotemporal blocks, HunyuanVideo 1.5 achieves a reported 1.87x end-to-end speedup on 10-second 720p generation over a FlashAttention-3 baseline while requiring as little as 14GB VRAM. This means creators with gaming PCs that clear that threshold (RTX 3090, RTX 4080, or equivalent) can generate professional-quality videos locally without expensive cloud credits.
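
As a quick way to reason about the 14GB figure, the sketch below checks a card's memory against that minimum. The VRAM values are the published sizes for the standard desktop versions of each card; `CARD_VRAM_GB` and `can_run_locally` are illustrative names invented for this example and are not part of HunyuanVideo.

```python
# Published VRAM sizes (GB) for standard desktop versions of a few cards.
# This table is illustrative and far from exhaustive.
CARD_VRAM_GB = {
    "RTX 3090": 24,
    "RTX 4080": 16,
    "RTX 4090": 24,
    "RTX 4060": 8,
}

MIN_VRAM_GB = 14  # minimum quoted in this review


def can_run_locally(card: str, min_vram_gb: int = MIN_VRAM_GB) -> bool:
    """Return True if the named card meets the minimum VRAM requirement."""
    if card not in CARD_VRAM_GB:
        raise ValueError(f"Unknown card: {card!r}")
    return CARD_VRAM_GB[card] >= min_vram_gb


if __name__ == "__main__":
    for card, vram in CARD_VRAM_GB.items():
        verdict = "OK" if can_run_locally(card) else "below minimum"
        print(f"{card}: {vram}GB -> {verdict}")
```

Meeting the minimum only says the model should load; resolution, clip length, and offloading settings still affect how comfortable generation is in practice.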

High-Fidelity 1080p Output

The model generates native video at 480p-720p resolution, then uses built-in video super-resolution networks (VSR) to upscale to 1080p while preserving detail and minimizing artifacts. The upscaling is remarkably clean, producing output that rivals models generating natively at higher resolutions.

Cinematic Motion and Physics Simulation

HunyuanVideo 1.5 excels at generating realistic camera movements (pans, dollies, tracking shots) and physically accurate object interactions. Water flows naturally, cloth drapes convincingly, and characters move with realistic weight and momentum. This physics-aware generation significantly reduces the “AI-generated” look that plagues less sophisticated models.

Bilingual Prompt Understanding

The model natively supports both Chinese and English prompts through glyph-aware text encoding. It understands instructions accurately in both languages and can even render on-screen text with reasonable clarity, a capability most competitors struggle with.

Identity-Stable Image-to-Video

When animating static images, HunyuanVideo 1.5 maintains character identity, style, and structure throughout motion sequences. Upload a character portrait, and the animated result preserves facial features, clothing details, and artistic style with minimal drift. This makes it ideal for animating concept art, game assets, or illustrated storyboards.

Multi-Style Rendering

Generate videos in diverse styles—photorealistic, cinematic, anime, illustration, stylized—while maintaining temporal coherence. The model adapts rendering to match the intended aesthetic rather than forcing everything through a single visual pipeline.

HunyuanVideo 1.5 Pricing and Access Options (March 2026)

| Access Method | Cost | Requirements | Best For |
|---|---|---|---|
| Open-Source Self-Hosted | Free | 14GB+ VRAM GPU, technical setup | Developers, researchers, privacy-focused creators |
| WaveSpeed.ai (480p) | $0.02/second | API access, internet connection | Low-volume users, testing, mobile creators |
| WaveSpeed.ai (720p) | $0.04/second | API access, internet connection | High-quality output, professional projects |
| ComfyUI Integration | Free | Local hardware, ComfyUI installation | Advanced users wanting node-based workflows |
| Overchat AI | 200 credits/generation | Overchat subscription | Multi-model users, simplified interface |

Cost comparison: A 10-second 720p video costs $0.40 via WaveSpeed.ai. The same video on Runway Gen-4 would consume 120+ credits (approximately $1.50-2.00 depending on plan). Over 100 videos, HunyuanVideo 1.5 saves $110-160.
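
The arithmetic behind that comparison can be reproduced with a short sketch. The rates and the Runway per-clip range are the figures quoted in this review, not values pulled from any provider's API; `clip_cost` and `savings_range` are illustrative helper names.

```python
WAVESPEED_720P_RATE = 0.04          # USD per second, from the pricing table
RUNWAY_CLIP_COST_RANGE = (1.50, 2.00)  # USD per 10-second clip, as quoted above


def clip_cost(seconds: float, rate_per_second: float) -> float:
    """Cost in USD of one clip billed per second of output."""
    return round(seconds * rate_per_second, 2)


def savings_range(num_clips: int, seconds: float = 10,
                  rate: float = WAVESPEED_720P_RATE,
                  competitor_range: tuple = RUNWAY_CLIP_COST_RANGE) -> tuple:
    """Return (low, high) savings in USD versus the competitor's per-clip range."""
    ours = round(clip_cost(seconds, rate) * num_clips, 2)
    low, high = competitor_range
    return (round(low * num_clips - ours, 2), round(high * num_clips - ours, 2))


if __name__ == "__main__":
    print(clip_cost(10, WAVESPEED_720P_RATE))  # 0.4
    print(savings_range(100))                  # (110.0, 160.0)
```

Swapping in the 480p rate of $0.02/second or a different clip length shows how quickly the gap changes with resolution and duration.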

Real-World Performance Testing

I tested HunyuanVideo 1.5 across multiple scenarios throughout March 2026:

Social Media Content Creation

A TikTok creator generated 50 short video clips (5-8 seconds each) for a month’s content calendar. Self-hosting on a 3090 GPU, total cost: electricity only (approximately $5). The same volume on Runway would have cost $150-200 in credits. Quality was sufficient for social platforms where compression and mobile viewing reduce the importance of absolute maximum fidelity.

Game Development Asset Generation

An indie game studio used HunyuanVideo 1.5 to generate environmental animations for a 2D adventure game: flowing water, swaying trees, animated backgrounds. The image-to-video capability let them animate concept art directly, maintaining the game’s distinctive visual style. Character identity stability meant animations stayed on-model.

Product Demo Videos

An e-commerce startup created product showcase videos by generating text-to-video clips showing their products in various contexts. The cinematic camera movements added production value without filming or motion graphics expertise. At $0.40 per 10-second clip, they produced 100 product videos for $40 versus thousands for traditional videography.

Concept Visualization for Pitches

A filmmaker used HunyuanVideo 1.5 to create animatics for investor pitches. The ability to rapidly generate cinematic sequences from text descriptions let him visualize complex scenes without expensive pre-production. The physics-aware motion made action sequences believable enough for pitch purposes.

Honest Strengths and Limitations

What HunyuanVideo 1.5 Does Exceptionally Well

- Efficiency is revolutionary: Professional quality on consumer hardware changes economics entirely

- Open-source freedom: Full control over deployment, customization, and data privacy

- Motion quality: Physics simulation and camera movement rival much larger models

- Cost-effectiveness: Self-hosting eliminates recurring cloud fees for high-volume users

- Character consistency: Image-to-video maintains visual identity impressively well

- Bilingual capability: Genuine Chinese/English parity, rare among Western tools

- Multi-style rendering: Adapts naturally to photorealistic, anime, and stylized aesthetics

Current Limitations

- Duration limited to 5-10 seconds: Cannot generate extended scenes or longer narrative sequences in a single pass

- Technical setup required: Self-hosting demands GPU hardware and technical knowledge

- No native audio: Silent videos require separate audio workflows

- Slower than instant cloud services: Generation takes minutes, not seconds

- Best for specific use cases: Not ideal for every video generation scenario

How HunyuanVideo 1.5 Compares to Competitors

vs. Runway Gen-3 Alpha

Runway offers longer duration (up to 4 minutes) and more polished cloud infrastructure but costs 4-5x more per video and requires ongoing subscriptions. HunyuanVideo 1.5 trades convenience for cost savings and local control. Choose Runway for client work requiring maximum polish and HunyuanVideo for high-volume internal content.

vs. Pika 2.0

Pika prioritizes speed and viral short-form effects but operates cloud-only with per-generation pricing. HunyuanVideo 1.5 offers comparable quality for short clips at dramatically lower cost for high-volume users, plus a local deployment option. Choose Pika for quick iteration and HunyuanVideo for cost-effective production.

vs. Kling 2.6

Kling generates longer videos (up to 3 minutes) with native audio but requires cloud credits and lacks open-source flexibility. HunyuanVideo 1.5 can’t match Kling’s duration but offers superior cost economics for short-form content and complete deployment control.

vs. Other Open-Source Models

Previous open-source video models required 40-80GB VRAM or produced significantly lower quality. HunyuanVideo 1.5 is the first to deliver professional-grade output on consumer hardware, making it the clear open-source leader in March 2026.

Ideal Use Cases for HunyuanVideo 1.5

- High-volume content creators: TikTok/YouTube creators producing 50+ videos monthly

- Game developers: Generating environmental animations and character sequences

- Indie filmmakers: Creating animatics, previsualization, and concept videos

- E-commerce businesses: Producing product showcase videos at scale

- App developers: Building AI video features without vendor lock-in

- Privacy-focused creators: Those requiring local processing of sensitive content

- International creators: Chinese/English bilingual capability serves global markets

Expert Tips for HunyuanVideo 1.5 Success

- Optimize hardware setup: 16GB+ VRAM provides better flexibility; 14GB works but limits options

- Use cloud API for testing: Start with WaveSpeed.ai to validate workflows before investing in hardware

- Combine with image models: Generate base images with Flux/Midjourney, animate with HunyuanVideo

- Batch process overnight: Queue multiple generations to maximize hardware utilization

- Leverage the open-source community: ComfyUI workflows and community tips accelerate learning

- Plan for silent output: Budget time/cost for audio generation or music licensing separately

- Use shorter durations for best quality: 5-8 second clips produce more coherent motion than 10 seconds

Who Should (and Shouldn’t) Use HunyuanVideo 1.5

HunyuanVideo 1.5 Is Ideal For:

- Creators producing high volumes of short-form video (50+ clips monthly)

- Developers building AI video features into products

- Studios and agencies seeking cost-effective production tools

- Privacy-conscious creators requiring local processing

- Technical users comfortable with open-source software

- Budget-conscious creators with gaming-grade hardware

HunyuanVideo 1.5 Is NOT Ideal For:

- Non-technical users wanting plug-and-play cloud services

- Creators needing videos longer than 10 seconds in single generations

- Those requiring native audio synchronization

- Users without access to 14GB+ VRAM GPUs (unless using cloud API)

- Projects demanding absolute maximum visual fidelity

Final Verdict: Democratizing Professional AI Video

HunyuanVideo 1.5 represents a pivotal moment in AI video generation: the point where professional-quality output becomes accessible on consumer hardware without recurring cloud costs. By achieving quality comparable to commercial services while requiring only 8.3 billion parameters, Tencent has fundamentally shifted the economics of AI video creation.

For high-volume creators, the cost savings are staggering. Producing 200 videos monthly costs $80 via WaveSpeed.ai or approximately $10 in electricity when self-hosted. The same volume on Runway would consume $300-600 in credits. Over a year, HunyuanVideo 1.5 saves thousands of dollars while delivering comparable quality for short-form content.
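
To see how "thousands of dollars" follows from those monthly figures, here is a minimal sketch that annualizes them. All inputs are the numbers quoted in this review (200 videos per month); `annual_savings` is an illustrative helper, and hardware purchase costs are deliberately left out.

```python
MONTHS_PER_YEAR = 12

WAVESPEED_MONTHLY = 80          # USD, 200 clips at $0.40 each
SELF_HOSTED_MONTHLY = 10        # USD, approximate electricity
RUNWAY_MONTHLY_RANGE = (300, 600)  # USD, range quoted in this review


def annual_savings(monthly_cost: int, alternative_range: tuple) -> tuple:
    """Return (low, high) yearly savings in USD versus the alternative's range."""
    low, high = alternative_range
    return ((low - monthly_cost) * MONTHS_PER_YEAR,
            (high - monthly_cost) * MONTHS_PER_YEAR)


if __name__ == "__main__":
    print(annual_savings(WAVESPEED_MONTHLY, RUNWAY_MONTHLY_RANGE))    # (2640, 6240)
    print(annual_savings(SELF_HOSTED_MONTHLY, RUNWAY_MONTHLY_RANGE))  # (3480, 7080)
```

The self-hosted figure ignores the up-front price of a GPU, so anyone buying hardware specifically for this should subtract that from the first year's savings.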

The open-source nature provides strategic advantages beyond cost: data privacy, customization possibilities, independence from vendor pricing changes, and the ability to integrate deeply into custom applications. For developers and agencies, this flexibility is as valuable as the cost savings.

Yes, there are limitations. The 5-10 second duration cap restricts use cases, the lack of native audio requires separate workflows, and self-hosting demands technical expertise. But for its target audience—high-volume creators, developers, studios producing short-form content, and privacy-focused users—these limitations are acceptable tradeoffs for the dramatic cost savings and deployment flexibility.

HunyuanVideo 1.5 won’t replace Runway for client work requiring maximum polish, or Kling for long-form narrative sequences. But it doesn’t need to. By delivering 80% of the quality at 20% of the cost with full local control, it has carved out a compelling niche that didn’t exist before: professional AI video for everyone with a gaming PC.

Rating: ★★★★☆ (4/5 stars)

Best for: High-volume creators, developers, and studios seeking cost-effective professional video generation with local deployment control

Skip if: You need plug-and-play cloud service, videos longer than 10 seconds, or native audio synchronization

For more insights, see our Best AI Video Tools for Product Demos in 2026: Showcase, Convert & Sell More.

Bottom line: HunyuanVideo 1.5 democratizes professional AI video generation by delivering commercial-quality output on consumer hardware at dramatically lower cost, making it the open-source leader and best value option for technical creators producing short-form content at scale.