In 2026, Alibaba has emerged as a major force in AI video generation, with a comprehensive suite of tools that rivals, and in some cases surpasses, competitors like Runway, HeyGen, and OpenAI’s Sora. If you’re a content creator, marketer, or developer looking for powerful AI video tools, Alibaba’s ecosystem deserves serious attention.

This guide covers everything you need to know about Alibaba’s flagship video AI tools: Wan 2.7 (the latest open-source video generation model), Wan 2.6 (reference-to-video specialist), EMO (portrait animation), and supporting tools like Qwen3.5-Omni. We’ll break down features, pricing, use cases, and how they stack up against the competition.

What is Wan 2.7? The Latest AI Video Model from Alibaba

Wan 2.7 launched in April 2026 as Alibaba’s most advanced AI video generation model. Built on a 27-billion-parameter Mixture-of-Experts (MoE) architecture with 14 billion active parameters per inference, it’s a powerhouse that competes directly with Sora 2, Runway Gen-4.5, and Kling AI.

Visit wan2.video to access the official platform.

Key Features of Wan 2.7

What sets Wan 2.7 apart is its unified four-mode architecture:

- Text-to-Video (T2V): Generate videos from text prompts with camera control and negative prompts (2-15 seconds)

- Image-to-Video (I2V): Animate static images with First+Last Frame control and 9-Grid storyboard synthesis

- Reference-to-Video (R2V): Lock character appearance AND voice across scenes using up to 5 mixed inputs

- VideoEdit: Instruction-based video editing with natural language commands like “change the jacket from red to navy”

Technical Specifications

- Resolution: 720P or 1080P

- Frame Rate: 30fps

- Max Duration: 15 seconds per generation

- Open Source: Apache 2.0 license (weights on HuggingFace)

- Hardware: the 5B variant runs on a single RTX 4090 (24GB VRAM); the smaller 1.3B model needs about 8GB VRAM

- Community: 15,700+ GitHub stars and 54K monthly HuggingFace downloads
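If you script Wan 2.7 jobs, the limits above can be checked client-side before you spend credits. This is a minimal sketch, assuming only the constraints stated in this article (2 to 15 seconds for T2V, a 15-second cap for every mode, 720P or 1080P, 30fps, up to 5 reference inputs for R2V). The `WanRequest` class, its fields, and the mode strings are hypothetical and are not part of any official Wan SDK or API.

```python
from dataclasses import dataclass, field

# Limits as described in this article; confirm them against the official Wan docs.
MODES = {"t2v", "i2v", "r2v", "edit"}
ALLOWED_RESOLUTIONS = {"720P", "1080P"}
FPS = 30
MAX_SECONDS = 15
MIN_T2V_SECONDS = 2
MAX_R2V_REFERENCES = 5


@dataclass
class WanRequest:
    """Hypothetical client-side request shape, not an official Wan SDK type."""

    mode: str
    duration_s: int
    resolution: str = "1080P"
    references: list[str] = field(default_factory=list)

    def validate(self) -> None:
        """Raise ValueError if the request breaks one of the stated limits."""
        if self.mode not in MODES:
            raise ValueError(f"unknown mode: {self.mode}")
        if self.resolution not in ALLOWED_RESOLUTIONS:
            raise ValueError(f"unsupported resolution: {self.resolution}")
        if not 0 < self.duration_s <= MAX_SECONDS:
            raise ValueError(f"duration must be positive and at most {MAX_SECONDS} seconds")
        if self.mode == "t2v" and self.duration_s < MIN_T2V_SECONDS:
            raise ValueError(f"T2V clips must be at least {MIN_T2V_SECONDS} seconds")
        if self.mode == "r2v" and len(self.references) > MAX_R2V_REFERENCES:
            raise ValueError(f"R2V accepts at most {MAX_R2V_REFERENCES} references")

    def frame_count(self) -> int:
        """Frames in the finished clip at the stated 30fps output rate."""
        return self.duration_s * FPS


request = WanRequest(mode="t2v", duration_s=15)
request.validate()
print(request.frame_count())  # 450
```

A maximum-length clip is therefore 450 frames at 30fps, which is a useful number when budgeting storage or post-processing.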

Standout Capabilities

First-and-Last-Frame Control: Define both the starting and ending frames, and Wan 2.7 generates everything in between. This is crucial for precise transitions and shot composition—something competitors like Runway and Sora don’t offer natively.

Voice Reference Cloning: In R2V mode, Wan 2.7 maintains both a character’s visual identity AND vocal consistency across multiple scenes without LoRA training. HeyGen charges $29/month minimum for voice cloning; Wan 2.7 does it at no cost if self-hosted. This is one reason Alibaba’s tools stand out in the market.

No Content Restrictions: Unlike Sora (which blocks real faces in photos) or Seedance (aggressive face filters), Wan 2.7 has zero content moderation filters.

Wan 2.7 Pros & Cons

| Pros | Cons |
|---|---|
| ✓ Fully open-source (Apache 2.0) | ✗ Lower raw visual fidelity than Seedance 2 |
| ✓ First+Last Frame control (unique) | ✗ 15-second max duration vs Kling’s 3 minutes |
| ✓ Voice cloning in R2V mode | ✗ Requires technical setup for self-hosting |
| ✓ Credits never expire (cloud platform) | ✗ Video URLs expire in 24 hours (API) |
| ✓ Zero content restrictions | ✗ Not yet rated on Artificial Analysis arena |
| ✓ Four unified modes (T2V/I2V/R2V/Edit) | |

Wan 2.6: China’s First Reference-to-Video Model

Released in December 2025, Wan 2.6 introduced groundbreaking Reference-to-Video (R2V) capabilities. Upload a character reference video with appearance and voice, then generate new scenes starring that same character with full audiovisual consistency.

Key Features

- Multi-shot storytelling: Generate wide shots → medium shots → close-ups from a single prompt

- Resolution: 1080P at 24fps, up to 15 seconds

- Audio synchronization: Native AV sync with realistic lip-sync (not post-production)

- Multilingual: Prompts in English, Chinese, Spanish, French; multilingual dialogue output

- Reference frames: Up to 150 frames for character consistency

Where to Access Wan 2.6

- Alibaba Cloud Model Studio (API)

- wan2.video official website

- Third-party platforms: invideo, Cliprise, alici.ai, runware.ai, aimlapi.com

Wan 2.6 Pros & Cons

| Pros | Cons |
|---|---|
| ✓ China’s first R2V model | ✗ Superseded by Wan 2.7 (April 2026) |
| ✓ Multi-shot storytelling from one prompt | ✗ 15-second max (same as 2.7) |
| ✓ 30-70% cheaper API cost than Sora/Kling | ✗ Fewer creative controls than 2.7 |
| ✓ Native multilingual audio-visual sync | ✗ API-only (no open-source weights) |
| ✓ Up to 150 reference frames | |

EMO (Emote Portrait Alive): AI Talking Heads from a Single Photo

EMO is Alibaba’s portrait animation system that transforms a single static photo into a lifelike talking-head video with synchronized lip-sync and facial expressions. Just provide one portrait image and an audio file (voice or singing), and EMO generates a continuous video matching the audio length.

How EMO Works

- Face Detection: emo-detect-v1 identifies face bounding box and animation region

- Video Generation: emo-v1 generates animated video from portrait + audio + bounding boxes

- Output: 512×512 (1:1) or 512×704 (3:4) video matching audio duration

EMO Pricing (Pay-as-You-Go)

| Service | Price | Rate Limit |
|---|---|---|
| emo-detect-v1 | $0.000574/image | 5 QPS |
| emo-v1 (1:1 video) | $0.011469/second | 1 concurrent job |
| emo-v1 (3:4 video) | $0.022937/second | 1 concurrent job |

CRITICAL: EMO is only available in the China (Beijing) region on Alibaba Cloud DashScope. API keys from Singapore or other regions will NOT work.
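Because EMO bills per second of output and the output length matches the audio, the cost of a clip can be estimated directly from the table. A minimal sketch, assuming one `emo-detect-v1` call per job plus `emo-v1` generation at the pay-as-you-go prices listed above; prices change, so treat the constants as a snapshot. The function names are illustrative, not part of the DashScope API.

```python
# Pay-as-you-go prices from the table above (USD); check current DashScope pricing.
DETECT_PER_IMAGE = 0.000574
GENERATE_PER_SECOND = {"1:1": 0.011469, "3:4": 0.022937}


def emo_clip_cost(audio_seconds: float, ratio: str = "1:1") -> float:
    """Estimate one EMO job: a single emo-detect-v1 call plus emo-v1 generation.

    The output video matches the audio length, so generation is billed on it.
    """
    if ratio not in GENERATE_PER_SECOND:
        raise ValueError(f"unsupported ratio: {ratio}")
    return DETECT_PER_IMAGE + audio_seconds * GENERATE_PER_SECOND[ratio]


def breakeven_seconds(monthly_fee: float, ratio: str = "1:1") -> float:
    """Seconds of generated video that cost as much as a flat monthly fee.

    Ignores the per-image detection fee, which is negligible at this scale.
    """
    return monthly_fee / GENERATE_PER_SECOND[ratio]


print(f"60 s clip, 1:1: ${emo_clip_cost(60):.2f}")  # $0.69
print(f"60 s clip, 3:4: ${emo_clip_cost(60, '3:4'):.2f}")  # $1.38
print(f"$29/month equals ~{breakeven_seconds(29) / 60:.0f} min of 1:1 output")  # ~42
```

Per-second billing is cheapest for light or bursty use: past roughly 42 minutes of 1:1 output a month, a $29 flat plan would cost less, assuming that plan covers the same volume.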

EMO Use Cases

- Digital spokespeople: Animate brand mascot photos for product announcements

- Social media content: Create talking-head videos from a single photo

- Education: Animate historical figures or teachers for e-learning

- Localization: Generate lip-synced videos in multiple languages from one portrait

- Corporate training: Animated trainer personas from employee headshots

EMO Pros & Cons

| Pros | Cons |
|---|---|
| ✓ Extremely affordable ($0.011/second) | ✗ China Beijing region ONLY |
| ✓ Single photo input (no video needed) | ✗ Only 1 concurrent job at a time |
| ✓ Perfect lip-sync and facial expressions | ✗ Limited to portrait/talking-head format |
| ✓ Unlimited video duration (matches audio) | ✗ Requires clear human voice audio |
| ✓ 97% cheaper than HeyGen ($29/mo min) | ✗ API-only (no web interface) |

Other Alibaba AI Video Tools

Qwen3.5-Omni (March 2026)

A native multimodal AI model with powerful video understanding capabilities:

- Context window: 256,000 tokens (processes up to 10 hours of audio or 400 seconds of 720p video)

- Speech recognition: 74 languages (including 39 Chinese dialects)

- Speech synthesis: 29 languages

- Key feature: Script-level video captioning with timestamps and speaker mapping

- Access: Alibaba Cloud Model Studio Offline API and Realtime API
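The two capacity figures imply rough average token rates: 256,000 tokens over 400 seconds of 720p video is 640 tokens per second, and over 10 hours (36,000 seconds) of audio it is about 7 tokens per second. The sketch below uses those derived averages to check whether a recording fits in one request. They are back-of-the-envelope numbers inferred from this article’s figures, not published tokenizer rates, and the 2,000-token prompt budget is an arbitrary assumption.

```python
CONTEXT_TOKENS = 256_000

# Derived averages (context window / stated maximum duration), not official rates.
VIDEO_720P_TOKENS_PER_S = CONTEXT_TOKENS / 400  # 640.0
AUDIO_TOKENS_PER_S = CONTEXT_TOKENS / (10 * 3600)  # about 7.1


def estimated_tokens(seconds: float, kind: str) -> float:
    """Rough token count for a 720p video or an audio recording of this length."""
    rate = {"video": VIDEO_720P_TOKENS_PER_S, "audio": AUDIO_TOKENS_PER_S}[kind]
    return seconds * rate


def fits_in_context(seconds: float, kind: str, prompt_tokens: int = 2_000) -> bool:
    """True if the media plus an assumed prompt budget stays within the window."""
    return estimated_tokens(seconds, kind) + prompt_tokens <= CONTEXT_TOKENS


print(fits_in_context(300, "video"))  # True: about 192,000 + 2,000 tokens
print(fits_in_context(400, "video"))  # False: the prompt pushes it over
```

In practice, long recordings near the limit are safer to split into segments and caption separately.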

Wan Image-Pro

- “Thinking Mode” with Chain-of-Thought composition planning

- 4K output support

- 12-language text rendering in images

- Up to 9 reference images for style control

Animate Anyone

Full-body animation that applies the motion from a source video to a portrait image (DashScope, cn-beijing region only)

LingMou

Template-based digital human broadcast videos for enterprise use. For more insights, see our Waymark AI Video Tool Review 2026: Script-to-TV-Ad in Minutes.

Alibaba AI Video Pricing: Complete Breakdown

Official Wan Platform Subscription

| Plan | Monthly Cost | Credits/Month | Videos/Month |
|---|---|---|---|
| Pro | $5/mo (annual) | 300 | ~60 videos |
| Premium | $20/mo (annual) | 1,200 | ~240 videos |

Critical differentiator: Wan credits NEVER expire, unlike Runway, Kling, Pika, and Sora, which all reset monthly.
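The plan table implies a per-video price. A minimal sketch of that arithmetic, using only the figures in the table; the video counts there are approximate, so actual credit cost per clip may vary with settings such as duration or resolution.

```python
# Plan figures from the table above (USD per month on annual plans).
PLANS = {
    "Pro": {"monthly_usd": 5, "credits": 300, "videos": 60},
    "Premium": {"monthly_usd": 20, "credits": 1_200, "videos": 240},
}


def per_video(plan: dict) -> tuple[float, float]:
    """Return (credits per video, USD per video) implied by a plan's figures."""
    return plan["credits"] / plan["videos"], plan["monthly_usd"] / plan["videos"]


for name, plan in PLANS.items():
    credits, usd = per_video(plan)
    print(f"{name}: {credits:.0f} credits/video, ${usd:.3f}/video")
# Pro: 5 credits/video, $0.083/video
# Premium: 5 credits/video, $0.083/video
```

Both plans work out to about 5 credits and roughly 8 cents per video, so Premium buys volume rather than a lower unit price.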

Third-Party API Pricing (Wan 2.6)

| Platform | 720P | 1080P |
|---|---|---|
| PiAPI.ai | $0.08/second | $0.12/second |
| WaveSpeed AI | $0.10/second | $0.15/second |

Per-clip cost at PiAPI pricing: 5 seconds = $0.40 (720P) / $0.60 (1080P); 15 seconds = $1.20 / $1.80
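The per-clip figures are just the per-second rate multiplied by clip length. A minimal sketch that reproduces them for both platforms in the table; the rates are a snapshot of third-party pricing and `clip_cost` is an illustrative helper, not a platform API.

```python
# Per-second Wan 2.6 API prices from the table above (USD).
RATES = {
    "PiAPI.ai": {"720P": 0.08, "1080P": 0.12},
    "WaveSpeed AI": {"720P": 0.10, "1080P": 0.15},
}


def clip_cost(platform: str, resolution: str, seconds: int) -> float:
    """Per-clip cost: per-second rate times clip length, rounded to cents."""
    return round(RATES[platform][resolution] * seconds, 2)


for platform in RATES:
    for seconds in (5, 15):
        costs = ", ".join(
            f"{res} ${clip_cost(platform, res, seconds):.2f}" for res in ("720P", "1080P")
        )
        print(f"{platform}, {seconds} s: {costs}")
```

The PiAPI rows match the figures above; at WaveSpeed AI rates the same clips cost $0.50/$0.75 (5 seconds) and $1.50/$2.25 (15 seconds).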

Open-Source / Free Option

Download Wan 2.1, 2.2, or 2.7 weights from HuggingFace under the Apache 2.0 license. Run them locally with no per-generation fees (you pay only for your own compute). Hardware requirements:

- 1.3B model: RTX 3070/4060 (8GB VRAM)

- 5B model: RTX 4090 (24GB VRAM)

- 14B model: 24GB+ VRAM (RTX 3090/4090)
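Before downloading weights, it helps to check which model sizes your GPU can hold. A minimal sketch, assuming the minimums in the list above are accurate for default settings; memory-saving options such as CPU offloading can lower peak VRAM, while higher resolutions or longer clips can raise it. `models_that_fit` is an illustrative helper, not part of the Wan tooling.

```python
# Minimum VRAM per model size as listed above (GB). The 5B figure assumes an
# RTX 4090's 24GB; the 1.3B figure is the 8GB of an RTX 3070/4060.
MIN_VRAM_GB = {"1.3B": 8, "5B": 24, "14B": 24}


def models_that_fit(vram_gb: float) -> list[str]:
    """Return the Wan model sizes whose listed minimum VRAM fits this GPU."""
    return [name for name, need in MIN_VRAM_GB.items() if vram_gb >= need]


print(models_that_fit(12))  # ['1.3B']
print(models_that_fit(24))  # ['1.3B', '5B', '14B']
```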

Use Cases: How Creators and Marketers Use Alibaba AI Video Tools

Social Media Content

Best tool: Wan 2.6 for multi-shot social clips (TikTok, Reels, Shorts)

- One prompt generates a 10-15 second clip with a beginning, middle, and end

- Native 9:16 vertical format support

- No editing skills required—the model handles continuity

- UGC-style video production at scale

Marketing and Advertising

Best tools: Wan 2.7 R2V (spokesperson), EMO (talking-head)

- Animate product photos into 360° demo videos

- Create character-consistent product videos with locked visual identity and voice

- EMO: animate brand mascot photo into product announcements at $0.011/second

- Rapid A/B concept video testing before production spend

Education and Training

Best tools: EMO (talking heads), Wan 2.7 R2V (consistent instructors)

- Animate historical figures or subject-matter experts from a single photo

- Generate dynamic explainer videos and animated lesson content

- Produce localized education videos in different languages

- Consistent animated trainer personas across multiple training modules

Corporate Training

- Create engaging presenter videos from employee headshots with voice-over

- LingMou: template-based broadcaster videos for compliance training

- Qwen3.5-Omni: auto-generate structured lesson outlines from video content

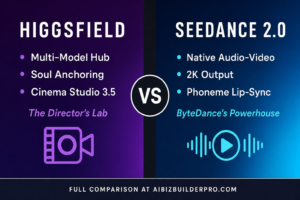

Alibaba vs. Competitors: Complete Comparison Table

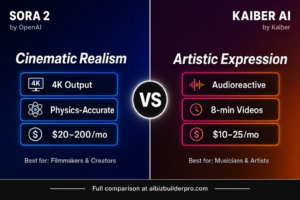

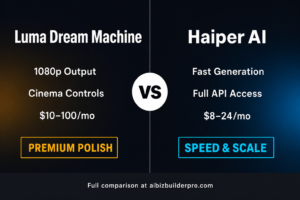

| Feature | Wan 2.7 | HeyGen | Runway Gen-4.5 | Kling AI | Sora 2 | Seedance 2 |
|---|---|---|---|---|---|---|
| Max Resolution | 1080P | 1080P | 1080P | Up to 4K | 1080P | 1080P |
| Max Duration | 15 sec | Unlimited (avatar) | 16 sec | 3 min (chain) | 25 sec | 20 sec |
| Open Source | YES (Apache 2.0) | No | No | No | No | No |
| Entry Price | $5/mo (Pro) | $29/mo | $12/mo | $6.99/mo | $20/mo | Free tier |
| Credits Expire? | NEVER | N/A | Monthly | Monthly | Monthly | Daily reset |
| Voice Cloning | Yes (R2V) | Yes (premium) | No | No | No | No |
| First+Last Frame | YES | No | No | Partial | No | No |
| Content Restrictions | NONE | Strict | Moderate | Moderate | Strict | Moderate |
| EU Access | Global | Global | Global | Global | NOT in EU | Global |
| Best For | Creative freedom + open-source | Avatar/multilingual | Cinematic quality | Best value/volume | ChatGPT users | Raw visual fidelity |

Quick Decision Guide

- Best cinematic quality: Runway Gen-4.5 (#1 benchmark, full editing suite)

- Best for talking-head avatars: HeyGen ($29/mo, 100+ avatars, 130+ languages)

- Best value cost-per-clip: Kling AI ($6.99/mo, ~$0.09/clip)

- Best free/open-source option: Wan 2.7 (Apache 2.0, zero cost self-hosted)

- Best for portrait animation: EMO ($0.011/sec, single photo input)

- Best creative control: Wan 2.7 (First+Last Frame, 9-Grid, voice clone, editing)

- Best for long-form video: Kling AI (3 min via extension chaining)

- Best for ChatGPT users: Sora 2 ($20/mo via ChatGPT Plus)

Final Verdict: Should You Use Alibaba AI Video Tools?

Wan 2.7 wins on creative freedom, workflow completeness, and cost. The combination of First+Last Frame control, voice cloning, instruction-based editing, and open-source Apache 2.0 licensing makes it the most flexible AI video platform available in 2026. If you need the highest raw visual quality, Runway or Seedance 2 may be better choices. But for developers, budget-conscious creators, and teams needing maximum creative control without vendor lock-in, Alibaba’s suite is unmatched.

EMO is a game-changer for talking-head content. At $0.011/second, a one-minute 1:1 clip costs about $0.69, roughly 97% less than HeyGen’s $29/month minimum, and EMO delivers comparable quality from a single photo input.

Wan 2.6 remains relevant for API-based workflows where you want multi-shot storytelling without self-hosting, though Wan 2.7 supersedes it for most use cases.

Get Started with Alibaba AI Video Tools Today

Ready to transform your video content workflow? Here’s how to get started:

- For cloud access: Sign up at wan2.video and claim your free credits (~15 credits, no credit card required)

- For open-source: Download Wan 2.7 weights from HuggingFace and run locally on your RTX GPU

- For EMO portrait animation: Create an Alibaba Cloud account with China (Beijing) region access via DashScope API

- For API integration: Use third-party platforms like PiAPI.ai ($0.08/sec), WaveSpeed AI, or invideo

The AI video generation landscape is evolving rapidly, but Alibaba’s combination of open-source commitment, comprehensive tooling, and competitive pricing positions them as a long-term leader. Whether you’re creating social media content, marketing materials, educational videos, or corporate training, there’s an Alibaba AI video tool that fits your workflow. For more insights, see our Best AI Video Tools for Product Demos in 2026: Showcase, Convert & Sell More.

Start experimenting today and discover why thousands of creators are choosing Alibaba’s AI video ecosystem over closed-source competitors.